When you recall an item from memory, a cue usually brings the associated parts of that item to mind. Could the same process be at work when you search for a visual target?

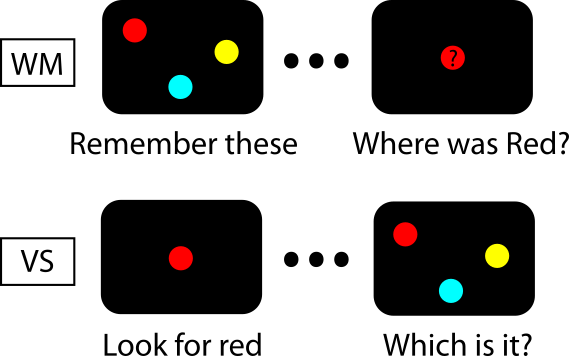

We tested a neural model designed to perform working memory tasks, to see if it could also perform visual search.

The model retrieves information when a partial cue re-activates a pattern of neurons by associative pattern completion. This same process could occur when we look for an item that we have in mind: the target in mind corresponds to information in memory, and the display that must be searched corresponds to a (complicated) retrieval cue.
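To make "associative pattern completion" concrete, here is a minimal sketch using a classic Hopfield-style network. This is an illustrative stand-in, not the model from the paper: the Hebbian storage rule, the sign-threshold update, and the names `store` and `complete` are all assumptions chosen for brevity.

```python
import numpy as np

def store(patterns):
    """Build Hebbian outer-product weights from +/-1 patterns (one per row)."""
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)  # no self-connections
    return weights

def complete(weights, cue, max_steps=10):
    """Update all units synchronously until the state stops changing."""
    state = np.asarray(cue, dtype=float).copy()
    for _ in range(max_steps):
        new_state = np.where(weights @ state >= 0, 1.0, -1.0)
        if np.array_equal(new_state, state):
            break
        state = new_state
    return state

# Two stored "memories" (hypothetical, chosen to be orthogonal).
memory = np.array([
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
], dtype=float)
weights = store(memory)

# Partial cue: the first half of memory[0]; the rest is unknown (0).
cue = memory[0].copy()
cue[4:] = 0.0

recalled = complete(weights, cue)
print(recalled)
```

The cue carries only half of the first pattern, and the recurrent weights fill in the missing half. In the search analogy, the display would play the role of the cue and the target held in mind the role of the stored pattern.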

We found that when pattern completion is applied to the search display, the memory item “amplifies” matching features in the display. Remarkably, this reproduces a number of classical findings in the visual search literature.
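A toy illustration of what "amplifying matching features" could look like, assuming a made-up four-feature code and a simple multiplicative gain. Both are assumptions for illustration only, and neither is taken from the paper's model.

```python
import numpy as np

# Hypothetical feature code: [red, green, vertical, horizontal]
target = np.array([1.0, 0.0, 1.0, 0.0])  # item held in memory: red vertical

display = np.array([
    [1.0, 0.0, 1.0, 0.0],  # red vertical (the target)
    [1.0, 0.0, 0.0, 1.0],  # red horizontal
    [0.0, 1.0, 1.0, 0.0],  # green vertical
    [0.0, 1.0, 0.0, 1.0],  # green horizontal
])

def amplify(items, template, gain=1.0):
    """Boost every display feature that the memory template also contains."""
    return items * (1.0 + gain * template)

boosted = amplify(display, target)
priority = boosted.sum(axis=1)      # total activation per display item
best = int(np.argmax(priority))     # index of the most strongly activated item
print(priority, best)
```

Items that share one feature with the target receive a partial boost, and the item that shares both receives the largest one.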

Importantly, the model also gets some things wrong. This shows us that there are certain mechanistic differences between memory and search.

“A common neural network architecture for visual search and working memory”, Bocincova et al., Visual Cognition (2020)
